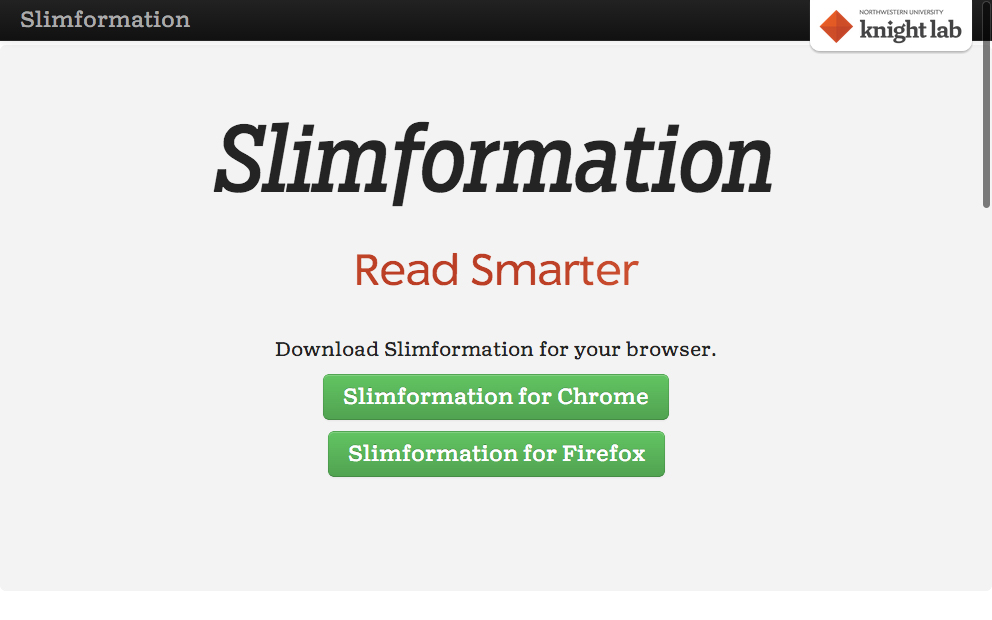

We've updated Slimformation with a number of technical improvements and a new Firefox add-on (the first version was built as a Chrome extension).

Slimformation is a prototype of a tool that helps readers track and improve their reading habits. After installing it and reading in the browser for, say, a week, a reader can see how much time they have spent on each category of content. The tool shows the sources they read and lets them set their own information consumption goals, such as how much time they would like to spend reading in each category, and it tracks whether those goals are met. The system also attempts to track the reading level of each source.
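To make the idea concrete, here is a minimal sketch of that bookkeeping: sum reading time per category, then compare the totals against a reader's goals. It is not Slimformation's actual code. The sample data, the function names `time_by_category` and `goal_report`, and the choice to treat each goal as a minimum number of minutes are all assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical reading log: one (category, minutes spent) pair per page visit.
visits = [
    ("politics", 30),
    ("sports", 45),
    ("technology", 20),
    ("politics", 15),
    ("sports", 10),
]

# Hypothetical weekly goals, in minutes. Assumption: a goal is a minimum
# amount of reading time the reader wants to reach in that category.
goals = {"politics": 60, "sports": 30, "technology": 20}


def time_by_category(visits):
    """Sum the minutes spent reading in each category."""
    totals = defaultdict(int)
    for category, minutes in visits:
        totals[category] += minutes
    return dict(totals)


def goal_report(totals, goals):
    """For each goal, record the target, the actual minutes, and whether it was met."""
    report = {}
    for category, target in goals.items():
        actual = totals.get(category, 0)
        report[category] = {
            "target": target,
            "actual": actual,
            "met": actual >= target,
        }
    return report


totals = time_by_category(visits)
report = goal_report(totals, goals)

for category, row in report.items():
    status = "met" if row["met"] else "not met"
    print(f"{category}: {row['actual']} of {row['target']} minutes ({status})")
```

A reader who wants to cut back on a category would need the opposite comparison (a maximum rather than a minimum), so a fuller version would probably store a direction alongside each goal.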

The original concept for the tool germinated at last year's Mozilla Festival during the election-hacking session. The current version includes some refactoring by Lab developer Scott Bradley, with input from Joe Germuska and me. This spring, Katie Zhu wrote about the first pass at Slimformation that she and her team developed in the joint projects class in technology and journalism.

It’s safe to say that this project is a work in progress, so please pardon our dust. Over the next several months, we’ll be thinking about ways to extend this technology by tuning the categorizer and improving the usability of the tool. We are also interested in exploring ways of analyzing what you read that go beyond topical categorization. Finally, we have been thinking about the trade-offs between privacy and power in a system that would compare people's reading habits.

That said, we want people to install the prototype and send their thoughts and feedback to knightlab@northwestern.edu. We want to know what works for you and what doesn’t, and what is or might be useful.