In April, the Washington Post announced that it had set a new single-month traffic record, with more than 52 million unique visitors. The figure represented not only a new record, but also a 65 percent year-over-year gain that led other big-name publishers, according to the Post.

Publisher Frederick J. Ryan praised the addition of new editorial staffers and awards, and then called special attention to engagement: While unique visitors were up 65 percent, pageviews were up 109 percent year-over-year.

The note on engagement struck me. I’d recently found myself clicking through story after story on the Washington Post site, primarily through the Post Recommends section at the bottom of each article. The accuracy of the recommendations was uncanny and so the experience stuck with me.

Then, at NICAR, I heard about Clavis, a new technology that helps power Post Recommends, and set out to learn how it works.

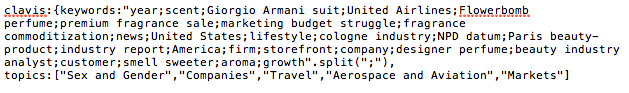

In a nutshell, Clavis is technology that figures out what stories are about, categorizes them by topic, and assigns each a series of keywords. It runs that same process on the Post’s readers and identifies their presumed interests based on stories they’ve read. Clavis then pairs readers with stories that match their reading history.

Clavis is just one part of the larger Post Recommends system, and it has helped the Post improve its click-through rate on Post Recommends by 95 percent since last June. Clavis also offers a good look at the process behind a recommender build (including natural language processing) and the challenges and opportunities news organizations face when building one.

Building Clavis

Work on Clavis began about a year ago with a brainstorming session between developer Gary Fenstamaker and digital product analyst Angela Wong. At the time the two only sought to build a story classifier that would help automate the process of adding SEO keywords to stories, but the technology they developed eventually became a full recommender.

Despite the tech savvy of the builders, Fenstamaker and Wong started the project with the rather low-tech strategy of talking to section editors about topics the Washington Post covers.

The challenge was to create a list of topics that would be thorough enough that every Post story could easily be assigned a variety of topics, but flexible enough that the system would be able to adapt to new coverage areas over many years.

“[We] wanted to build something foundational that wouldn’t shift around a lot,” Wong said.

That meant that some topics that seemed like obvious candidates for inclusion — the Iraq War for example — would be dropped for something more general.

“We didn’t want ‘Al Qaeda,’” Fenstamaker said. “We wanted ‘terrorism groups.’”

The logic was that while Al Qaeda would eventually fade from the news, the general topics of terrorism and terrorism groups are likely to remain.

The pair eventually settled on a list of about 130 topics that could be assigned to each one of the more than 700 stories the Post publishes each day — a task that is easier said than done.

“It’s hard to teach a machine to understand words,” Wong said. “How are you going to teach a computer about why this (particular) is so significant?”

While it’s simple enough to tell a computer to make a list of all the words in a story and perhaps provide a count of each, how do you teach it to ignore “the,” “this,” and “good,” which are very common, but aren’t very telling about a story’s main ideas?

The answer for the Washington Post team was in a set of common machine learning algorithms.

Mapping new articles

Like other content-based recommenders, Clavis maps articles by running them through a term frequency-inverse document frequency (tf-idf) algorithm to figure out which words in a story are important and thus likely indicators of what the story is about.

For the non-nerds among us, tf-idf basically counts how many times a word appears in an article and compares that number to the number of times it appears in other stories in a given set. The fewer articles a word appears in, the more importance it is assigned in the current article.
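For the nerds among us, the idea can be sketched in a few lines of Python. This is a minimal illustration of textbook tf-idf, not the Post's code: the `tf_idf` function, the whitespace tokenizer, and the three toy stories are all assumptions made for the example.

```python
import math
from collections import Counter

def tf_idf(docs):
    """Return one {word: score} dict per document (textbook tf-idf)."""
    # Naive tokenizer (an assumption): lowercase and split on whitespace.
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many stories does each word appear?
    doc_freq = Counter(word for tokens in tokenized for word in set(tokens))
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        scores.append({
            # Term frequency times inverse document frequency.
            word: (count / len(tokens)) * math.log(n_docs / doc_freq[word])
            for word, count in counts.items()
        })
    return scores

# Toy stories, invented for illustration.
stories = [
    "the senate passed the budget bill",
    "the team won the game",
    "the budget for the stadium grew",
]
scores = tf_idf(stories)
```

Because "the" appears in every story, its score drops to zero, while "senate," which appears in only one story, outscores "budget," which appears in two.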

In addition to the standard tf-idf algorithm, Clavis also adds extra weight to proper nouns, and more weight still if those nouns appear earlier in a story, Fenstamaker said.
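The article's sources did not spell out how that extra weight is calculated, so the sketch below is only a guess at the general shape. The `boost_proper_nouns` function, its two boost parameters, and the capitalization check standing in for real proper-noun detection are all hypothetical.

```python
def boost_proper_nouns(tokens, base_scores, proper_boost=2.0, early_boost=1.0):
    """Scale up scores for likely proper nouns, more so when they appear early.

    tokens keep their original casing; base_scores is keyed by lowercase word.
    The boost values are invented defaults, not figures from the Post.
    """
    boosted = dict(base_scores)
    already_boosted = set()
    for position, token in enumerate(tokens):
        word = token.lower()
        if word in already_boosted or word not in boosted:
            continue
        # Crude stand-in for proper-noun detection (an assumption):
        # capitalized and not the first token of the text.
        if position > 0 and token[0].isupper():
            already_boosted.add(word)
            earliness = 1.0 - position / len(tokens)  # near 1.0 early, near 0 late
            boosted[word] *= proper_boost + early_boost * earliness
    return boosted

tokens = "Officials said Congress will meet Obama".split()
base = {token.lower(): 1.0 for token in tokens}
boosted = boost_proper_nouns(tokens, base)
```

Here "Congress" and "Obama" both get a lift, and "Congress" gets the larger one because it appears earlier. A production system would more likely rely on a part-of-speech tagger or named-entity recognizer than on capitalization.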

Every time the Post publishes a new story, Clavis is notified and then processes the story. To lighten the load on systems, it starts recommending a new batch of stories once an hour.

Mapping users

Assigning topics to stories is only half of what Clavis does. The technology also classifies readers in a similar fashion, assigning each reader topics and a group of keywords based on the stories they read.
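One common way to do this kind of matching is to add up the keyword weights of the stories a reader has opened and then rank new stories by cosine similarity to that profile. The sketch below assumes that approach; `build_profile`, `cosine`, `recommend`, and the sample weights are illustrative names and numbers, not details confirmed by the Post.

```python
import math

def build_profile(read_story_vectors):
    """Sum the keyword weights of every story a reader has read."""
    profile = {}
    for vector in read_story_vectors:
        for word, weight in vector.items():
            profile[word] = profile.get(word, 0.0) + weight
    return profile

def cosine(a, b):
    """Cosine similarity between two sparse {word: weight} vectors."""
    dot = sum(weight * b.get(word, 0.0) for word, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def recommend(profile, candidates, k=2):
    """Return the k candidate story ids most similar to the reader profile."""
    ranked = sorted(candidates,
                    key=lambda story_id: cosine(profile, candidates[story_id]),
                    reverse=True)
    return ranked[:k]

# Invented reading history and candidate stories.
history = [
    {"senate": 0.5, "budget": 0.3},
    {"congress": 0.4, "budget": 0.2},
]
candidates = {
    "tax-vote": {"budget": 0.6, "senate": 0.2},
    "playoffs": {"team": 0.7, "game": 0.4},
    "hearing": {"congress": 0.5},
}
profile = build_profile(history)
picks = recommend(profile, candidates)
```

A reader whose history is all budget and Congress coverage gets the tax vote and the hearing, while the playoffs story scores zero.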

The site’s registered users are obviously easier to assign topics to, but the Washington Post can recommend relevant content to readers who only visit occasionally by using cookies.

“It’s definitely harder with anonymous users,” Fenstamaker said, acknowledging that relying on cookies is “highly volatile” and that the Post’s picture of unregistered users decays over time as people’s interests change.

A hybrid approach

Clavis is what is known as a content-based recommendation engine, which means it looks at the content of a piece of media (or metadata associated with it), figures out what the content is about, and then recommends similar pieces of media. By way of example, Pandora uses a content-based recommendation engine and plays songs for users based on their similarity to songs it knows they like.

The other primary type of recommendation engine is a collaborative filter, which assumes that two users who have read similar stories in the past will read similar stories in the future and forms a recommendation based on that history. A collaborative filter requires a fair amount of data, but doesn’t require the algorithm to “understand” anything about the thing it’s recommending. The best-known example of a collaborative filter is likely Amazon’s “People who viewed this item also viewed this item” recommendation.
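A stripped-down version of that "also viewed" logic can be built from nothing but reading histories. The sketch below is a simple item co-occurrence counter, one of several ways to do collaborative filtering. The function names and the four made-up readers are assumptions for illustration.

```python
from collections import Counter, defaultdict
from itertools import permutations

def co_read_counts(histories):
    """Count how often each pair of stories was read by the same person."""
    co_reads = defaultdict(Counter)
    for stories in histories.values():
        # Every ordered pair of distinct stories in one reader's history.
        for first, second in permutations(set(stories), 2):
            co_reads[first][second] += 1
    return co_reads

def also_read(co_reads, story, k=2):
    """Stories most often read alongside `story`. No text analysis needed."""
    return [other for other, _ in co_reads[story].most_common(k)]

# Invented reading histories.
histories = {
    "ann": ["budget", "senate", "playoffs"],
    "bob": ["budget", "senate"],
    "cat": ["budget", "senate", "hearing"],
    "dan": ["budget", "playoffs"],
}
co_reads = co_read_counts(histories)
suggestions = also_read(co_reads, "budget")
```

Nothing here looks at what the stories say. The counts come entirely from who read what, which is also why a story nobody has read yet has nothing to offer the filter.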

Each approach has specific strengths and weaknesses. A collaborative filter, for example, faces what’s known as the “cold start” problem — meaning that it requires a history and data to learn from. In the news business, that’s a problem because new stories are published constantly and a machine takes too long to collect data on a new story.

On the other hand, collaborative filters don’t have to know anything about what a piece of media is about to make decent recommendations — an attribute that is particularly helpful when it comes to things that are difficult for a machine to understand like a video, photo, or piece of audio.

The Washington Post ended up relying on a hybrid system to power Post Recommends, said Sam Han, the Post’s director of big data and personalization. That system takes into account both personalization (Clavis) and popularity (collaborative filtering).
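The Post did not describe how it combines the two signals. One simple possibility, shown here purely as an assumption, is a weighted blend of a content score and a popularity score. `hybrid_rank`, the `content_weight` parameter, and all of the numbers are hypothetical.

```python
def hybrid_rank(content_scores, popularity_scores, content_weight=0.7):
    """Rank stories by a weighted blend of two scores, each between 0 and 1.

    content_weight is an invented knob: 1.0 means purely personalized,
    0.0 means purely popularity-driven.
    """
    stories = set(content_scores) | set(popularity_scores)

    def blended(story):
        return (content_weight * content_scores.get(story, 0.0)
                + (1 - content_weight) * popularity_scores.get(story, 0.0))

    # Sort by blended score, highest first; break ties alphabetically.
    return sorted(stories, key=lambda story: (-blended(story), story))

# Invented scores for one reader.
content = {"tax-vote": 0.8, "hearing": 0.5, "playoffs": 0.0}
popularity = {"tax-vote": 0.2, "hearing": 0.3, "playoffs": 1.0}

personalized_first = hybrid_rank(content, popularity, content_weight=0.7)
popular_first = hybrid_rank(content, popularity, content_weight=0.2)
```

Leaning on the content score puts the tax vote first for this reader, while leaning on popularity pushes the playoffs story to the top. A story missing from one of the two dictionaries still gets ranked on the other signal alone, which is one way a blend can soften the cold start problem.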

Custom recommender vs. vendor

While the Post and a few other publications have had some success analyzing data and recommending content, it’s a difficult thing for most organizations to do.

The Post has an entire data and personalization team and content recommendation is one of its primary projects, said Wong.

“It’s very expensive to do all this,” Wong said. Naturally many traditional publications won’t have the resources to devote to personalization and recommendation.

“There is so much information to collect, that I don’t know that a lot of news organizations are ready to collect, analyze, and act on it,” she said.

Most publishers turn to a third-party solution like Outbrain or Taboola to keep users clicking.

But for those publishers with the ability to collect and understand data and then make smart recommenders, it can add significant value.

“News organizations are finally able to do something with all the words that they’ve written and published over the years,” Wong said.
