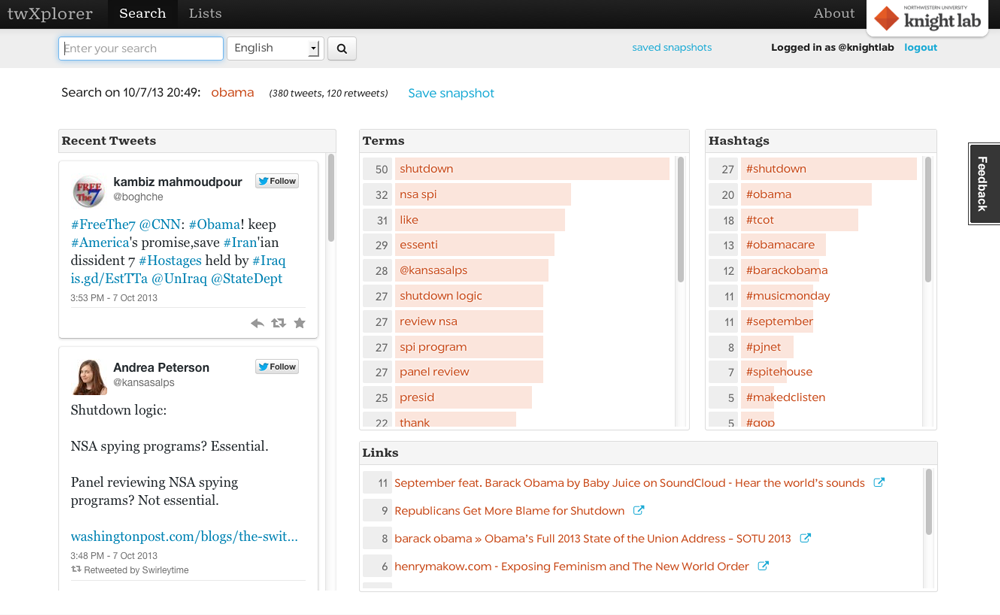

Just over two weeks ago we launched twXplorer, a tool to help people make sense of searches and find interesting conversations on Twitter.

When we launched the tool we didn’t know how it would be received or what use people would find for it. So far, we've been pretty happy to have more than 13,000 people use twXplorer and to get a few kind words from The Atlantic (“control your own little battalion of news-finding bots”), The Buttry Diary ("Twitter search just got waaaay better"), All Twitter, and Spain’s RedAssociales.

Kind words are great, of course, but nothing beats honest feedback. If you've used the tool and have some ideas or special use cases that twXplorer might address, we'd love to hear from you. We're likely to make a few tweaks to twXplorer and your feedback will help us determine which direction to take. Drop us a line at KnightLab@northwestern.edu.

In the meantime, a few of the features requested so far:

- Search date or time range. This is a common request, but unfortunately it's not something we can implement, for the simple reason that Twitter's API doesn't support it. A common related question has to do with the number of tweets examined. For now, twXplorer looks at the most recent 500 tweets that contain the term you searched for.

- Search by location. Currently twXplorer collects tweets that contain the term you searched for without regard for location. We’ve learned from other projects, specifically NeighborhoodBuzz, that relatively few people geotag tweets, which makes a meaningful analysis difficult. On top of that, the search API only supports search by a radius around a point, which is not how most people think about doing searches.

- An API. There’s some interest in an API. No word on what aspect of twXplorer is most intriguing, but we’re reaching out to folks who use the tool and will learn more as we do.

- Integration with Storify. Some people want to Storify tweets directly from twXplorer.

- Sentiment analysis.

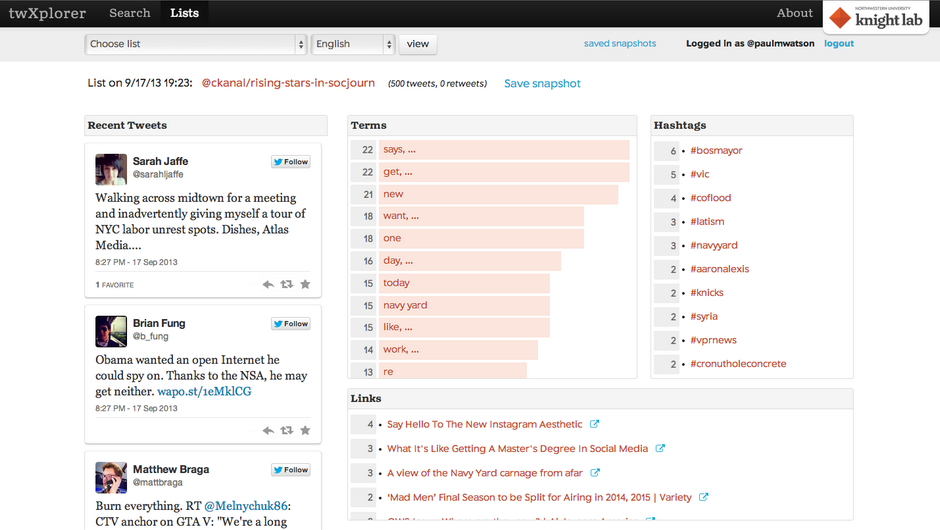

- A better block list. Paul Watson at Storyful looked at the results of a Twitter list analysis and noted that the list of most frequent terms was pretty weak, including words like “says,” “get,” “new,” and “want” among the top five.

- Analysis over time. In a slight twist on the first bullet in this list, one user wanted to capture the most common hashtags used by her Twitter lists over the course of a week. Using twXplorer as it’s currently configured, she could achieve the same result by taking a snapshot a few times each day, but that’s an awful lot of work. Maybe scheduled searches would be useful?

- Download search results/analysis as JSON.

- Visualizations across snapshots and permalinks to snapshots.

- Better handling of trigrams. Those of us who are new to computational linguistics are getting a light vocabulary lesson. We’ve got unigrams (single words), bigrams (two-word phrases) and trigrams (three-word phrases). Some folks want us to do a better job with these longer phrases.

- Facebook equivalent?
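
For anyone else getting that vocabulary lesson, here is a minimal Python sketch of how unigrams, bigrams and trigrams can be counted across a batch of tweets, with a block list filtering out weak terms. It is an illustration only, not twXplorer's actual code: the `BLOCK_LIST` contents, the function names and the rule of dropping a phrase only when every word in it is blocked are all assumptions made for the example.

```python
import re
from collections import Counter

# Hypothetical block list for illustration; not twXplorer's real list.
BLOCK_LIST = {"a", "the", "to", "of", "says", "get", "new", "want"}


def tokenize(text):
    """Lowercase a tweet and split it into words, keeping # and @ prefixes."""
    return re.findall(r"[#@]?\w+", text.lower())


def ngrams(tokens, n):
    """Return every run of n consecutive tokens as a tuple."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def top_terms(tweets, n, limit=5):
    """Count n-word phrases across tweets and return the most frequent ones."""
    counts = Counter()
    for tweet in tweets:
        for gram in ngrams(tokenize(tweet), n):
            # Assumed rule: skip a phrase only if every word in it is blocked.
            if all(word in BLOCK_LIST for word in gram):
                continue
            counts[" ".join(gram)] += 1
    return counts.most_common(limit)


tweets = ["Knight Lab says new tool", "Knight Lab launches tool"]
unigrams = top_terms(tweets, 1)   # single words
bigrams = top_terms(tweets, 2)    # two-word phrases
trigrams = top_terms(tweets, 3)   # three-word phrases
```

With a rule like this, "says" and "new" never show up as single terms, but a phrase such as "new tool" still counts because only one of its words is blocked. Whether that is the right trade-off for longer phrases is exactly the kind of feedback we're after.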

Again, that's a pretty long list and we'll be digging deeper over the next few weeks to learn more about how people actually use the tool.

Remember, if you're a twXplorer user, you can help shape its future. What would make the tool really useful for you? What part of Twitter search is the most painful to deal with, either on Twitter itself or on twXplorer? What do you consistently find yourself wanting when you search?

Have an answer? Drop us a line at knightlab@northwestern.edu.
