As I recently wrote, last week Joe and I had the privilege to participate in the Hacks/Hackers Buenos Aires Media Party. We prepared a couple of talks and spoke to the group: mine was about the current state of Knight Lab, and Joe's was about the future of journalism. We also prepared and facilitated a workshop on designing tools for investigation.

We really like this workshop. Since the beginning of the year we have been running various versions of it, both internally for our team at the Lab (when we need to think more critically about an idea or research area) and externally as a means to work with the journalism community. We like it because it generates big ideas while also teaching sound thinking about technology projects.

Put simply, we begin the workshop by defining the theme of the discussion. At this event in Buenos Aires, we chose to focus on generating ideas around journalistic investigation, information gathering and research. We explain that the workshop is a step-by-step series of brainstorms that become more and more granular as we progress.

We try to focus the design process on solving specific problems for a specific kind of person. We call this abstract person our persona, and we begin by collectively generating the defining characteristics of that persona, writing them in a place that the entire group can see (like a wall, whiteboard, chalkboard or projected screen).

We break up into smaller groups and instruct each group to do some very high-level brainstorming for tool ideas and articulate some specific use-cases or situational scenarios.

After five or ten minutes, we regroup and someone from each team reports back the ideas that were generated. We write them out for everyone to see, and consolidate related ideas, resulting in a handful of possible solutions that could be designed further.

For the rest of the exercise, it is best to divide into groups of about five people. At this event, we had about 15 people who agreed to stick around for the full duration of the workshop (we insist on this level of commitment), so we chose the three best ideas and asked for three volunteers to act as captains, each leading one idea, for other interested attendees to gather around.

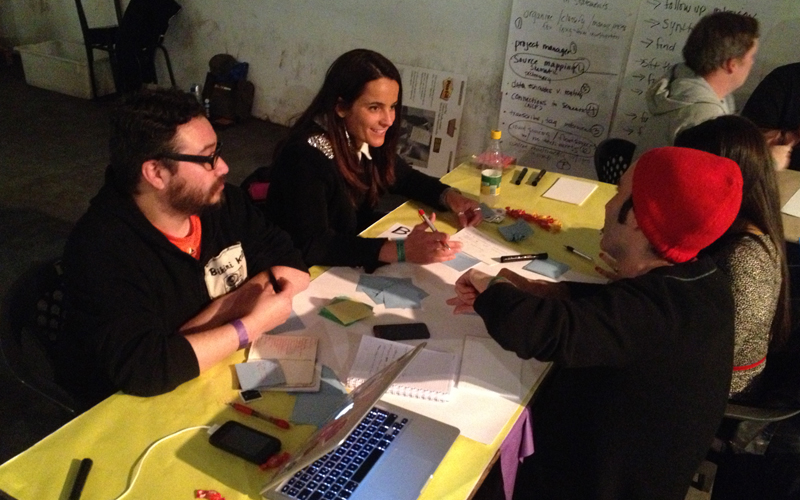

At this point we ask the groups to start generating all of the features for their tool. We ask them to think in terms of very basic tasks: a user would need to be able to input data manually, batch upload data, edit data, view data, export data, etc. Each feature is written on its own index card or post-it note. The group is instructed to think of every single feature that comes to mind and write them down, without focus on practicality or methods for execution.

Next, we ask the teams to group the cards into four areas: must-have features, should-have features, nice-to-haves, and "meh." (Sometimes for instructional workshops we leave out the "should-have" priority.) This part of the process helps establish the most fundamental features: the ones the tool absolutely must have in order to solve a given challenge, scenario or use-case. It forces the team to think pragmatically and helps the individual team members clarify their intentions and expectations.

When we're applying this process to projects at the Lab, we also estimate the effort each feature will require and the degree of risk or uncertainty involved in implementing it. In workshops there is rarely enough time for this step, and participants don't always have enough practical experience to do it effectively. Instead, we usually conclude by asking each group to report back with a three- to five-sentence summary of the idea and how it solves a specific use case for a defined user, plus a quick list of the features the tool must include to be viable.

Our Hacks/Hackers Buenos Aires Media Party workshop was a lot of fun, and we were excited to see our friend Nuno Vargas running a design-thinking workshop as well. We had a great time and felt grateful to spend time with great journalists. The following are summaries of each of the three ideas:

A tool for sourcing information in places with limited access to technology

The group began designing a system that would help gather crowd-sourced information in places and communities with limited or low access to technology. They felt it had to have the ability to work offline, batch upload and download data, identify information providers (both the producer and the interviewed), capture audio/photos/video, capture timestamps and geolocation data, and easily create new forms. They felt it would be nice to have the ability to track progress, provide translation, retrieve data from databases, group/tag/score entries, provide basic data visualization and a preview mode.

Public reputation market

This group proposed a public reputation market for journalists and sources, which would allow a source to give a journalist feedback through evaluation. The idea was a CRM-style mechanism to centralize, rate, and track the sources available to a newsroom. The group liked the idea of an Airbnb-like 'rate my reporter' feature that would allow a reporter's sources to rate or grade their interview experience. The system would notify the news organization's ombudsman if a journalist received a negative evaluation. The content within the system would include affiliations, subjects/topics, links and an ability to indicate whether the source was on- or off-the-record. A fully-featured system could include aggregate rating averages for a given news organization, giving readers and sources a sense of its trustworthiness. It would have a source dashboard and contact tracker. It would correlate sources to any potential corrections from the news organization.

A system that attempts to optimize the investigation and research process

This ambitious team wanted a system that would save time for the researcher by doing such things as helping to find new angles, surfacing relevant information and highlighting sources. They were calling it "Investigation Optimizer" and seem to have defined the system around search. Some of its necessary features included a workflow that defines the kinds of data of interest, orders and prioritizes data, and manages sources and quotes; an index of public data and social media; and a web interface. They reported that it would be nice if the system also allowed for search filtering (by relevance, chronology, known sources, etc.), topic grouping and structuring of results, the ability to save previous search queries, and a highlighting feature for popular quotes and data sources.

Special thanks to Joe Germuska for his contribution to this writeup.
