Over the weekend we released SoundCite, a tool that lets anyone easily include inline audio in their stories. We have open-sourced our code on GitHub and would encourage you to contribute in any way you wish. Fork it, send us pull requests or just let us know what you think.

The project has been a fun personal challenge, about 10 months in the making. Last year, my professor, Jeremy Gilbert, set a challenge for the final project in our Advanced Interactive and Design class: design the future of news, whatever that may be. Students prototyped interactive touchscreen kitchen tables, news sources that upped the ante on curation and new ways to track events across a community. They were bold, grand ideas for an increasingly connected future of journalism.

I approached the project at a more granular level. I asked myself, “How can we tell our individual stories better in a digital age?” I decided that we needed to develop better ways to semantically attach pertinent media to our words. Storytelling on the web can combine visual and aural media with text in a way no other medium can. Given my love of music and all things audio, I chose to tackle bridging the gap between aural and textual media. The solution I arrived at was SoundCite.

My initial prototype was nothing more than a browser hack, but the core ideas were in place and still hold true in today's product. With SoundCite, a writer can take an audio source hosted on SoundCloud, select a start and end point, and create an audio clip that embeds neatly into text, much like a hyperlink. There is no external audio player, no interface and no distractions. SoundCite is a tool for unifying audio and text to tell your story better.

The prototype eventually made its way to the Knight Lab, and for the past nine months, we've been working hard to develop this idea into a fully capable product that any publisher can use. Our current alpha release is not quite there yet, but we hope you will start testing it privately in your storytelling and thinking of ways SoundCite can work for you as we continue improving toward a beta release. We have plenty of ideas to get you started.

Before I began programming, I fancied myself a music critic. Yet no matter how much I practiced the craft, I found it impossible to translate the sounds I heard into words that any reader would understand. Ironically, writing about the actual music was the hardest part of writing about music. If I could just point the reader to the snippet of music I wanted to discuss, I could write the parts I really wanted to write: the analysis.

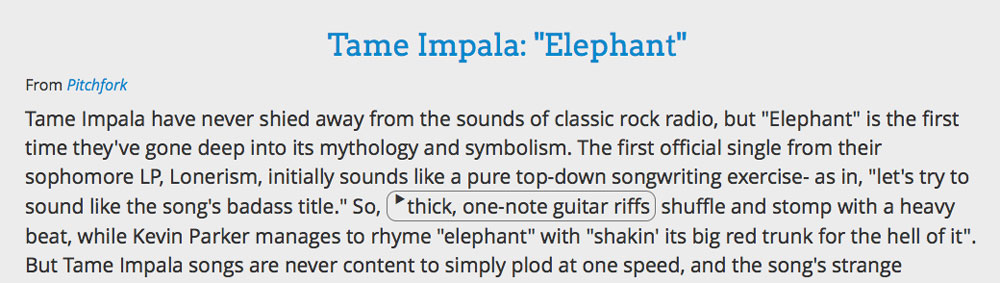

SoundCite works perfectly in this referential style. Indeed, its name reflects that idea: the sounds you link to text are citations of the source you are referencing. Here's an example I adapted from a Pitchfork review of Tame Impala's 2012 single, “Elephant”:

This was my initial use case for SoundCite, but as we continued to develop the product, we discovered even more compelling ones. The true power of audio is the emotional depth it can bring to a story. When broadcast radio was introduced, families gathered around radios in their homes to hear the latest episode of Amos 'n' Andy or The Jack Benny Program. Instead of reading and imagining a character's voice, they could hear it. “Wait Wait... Don't Tell Me!” exists today because of this power.

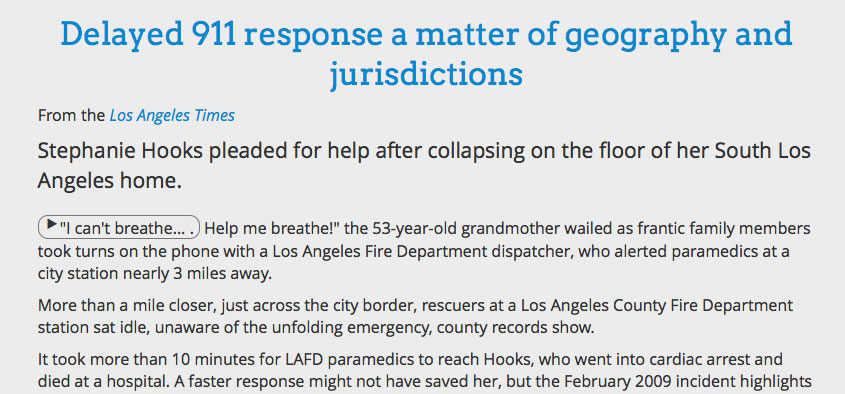

SoundCite can be used in this way to add emotional depth and voice to your stories. In the months I have been developing the product, I have come across countless stories that SoundCite would have served well, but none made me wish I had worked faster more than the Los Angeles Times' feature on delayed 911 calls. Recognizing the power of the voices in these calls, the Times uploaded one particular call to SoundCloud and embedded the entire 11-minute recording at the top of the story so readers could listen. Meanwhile, the writers referenced the call throughout the story, weaving their own narrative. Here's how SoundCite can make these two mediums work together:

Sometimes the natural sound of the environment you are writing about is, much like music, impossible to describe, yet to take the reader to that place, you as a writer must try. SoundCite not only solves that problem but also enhances the story, creating a truly immersive experience.

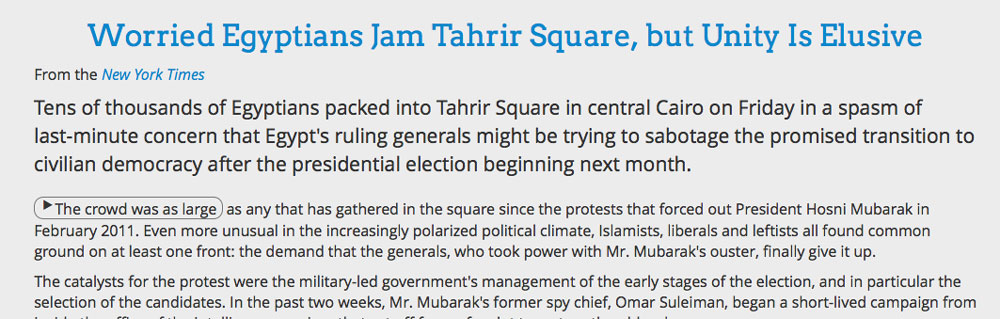

This goes beyond idyllic nature sounds for travel stories, though SoundCite certainly works for that style. The chaos of the Arab Spring left behind all kinds of multimedia artifacts that made the protests so much more vivid. With SoundCite, those primary-source sounds can become tools for immersing readers in the scene. Here’s SoundCite applied to a New York Times story:

These are just a few of the many ways SoundCite can enhance a story. SoundCite can be used for building interactive transcripts during crucial Supreme Court hearings, fact-checking politicians against their own words, capturing a play-by-play call of a buzzer-beating three-point shot and so much more that we haven't even thought of yet.

SoundCite is still an alpha product and is certainly not ready for use in published stories. We have many bugs to squash and features to add, including creating more than one clip at a time and customizing your SoundCite embed.

If you want to try it out, head over to our website and use our clip creator to generate the embed code! The process requires no technical experience beyond copying and pasting some pre-generated code into your post editor, just like a YouTube video.

Again, check out the code on GitHub and feel free to contribute in any way you wish! Fork it, send us pull requests or just let us know what you think. We want all the feedback we can get as we build toward a beta release in the coming weeks. Stay tuned.
